Launching AI with confidence: why experts need to sit at the heart of product development

By Ryan Lock - 9 April 2026


Regulated organisations face a clear opportunity: bring AI into the core of new products, and customers can gain smarter, more flexible access to expertise at scale. That opens the door to new service models and strengthens market position in an AI-driven landscape.

Most organisations already recognise this. The bigger question is why so few are getting beyond the prototype stage.

In regulated environments, AI cannot simply be impressive. It has to be defensible. Decisions must be auditable, outcomes must be consistent, and the system must stand up to legal scrutiny. When the same question produces different answers, confidence erodes and momentum stalls.

Through our work with Lewis Silkin on Delphius and Lettu, we have seen what separates the businesses that move forward. They embed domain expertise directly into how their AI products are developed, tested and scaled. Used properly, that expertise makes outputs consistent, explainable and trustworthy enough to reach real users.

What follows is the framework we use to help organisations move AI products from promising prototypes into production with confidence.

Traditional testing doesn’t work with LLMs

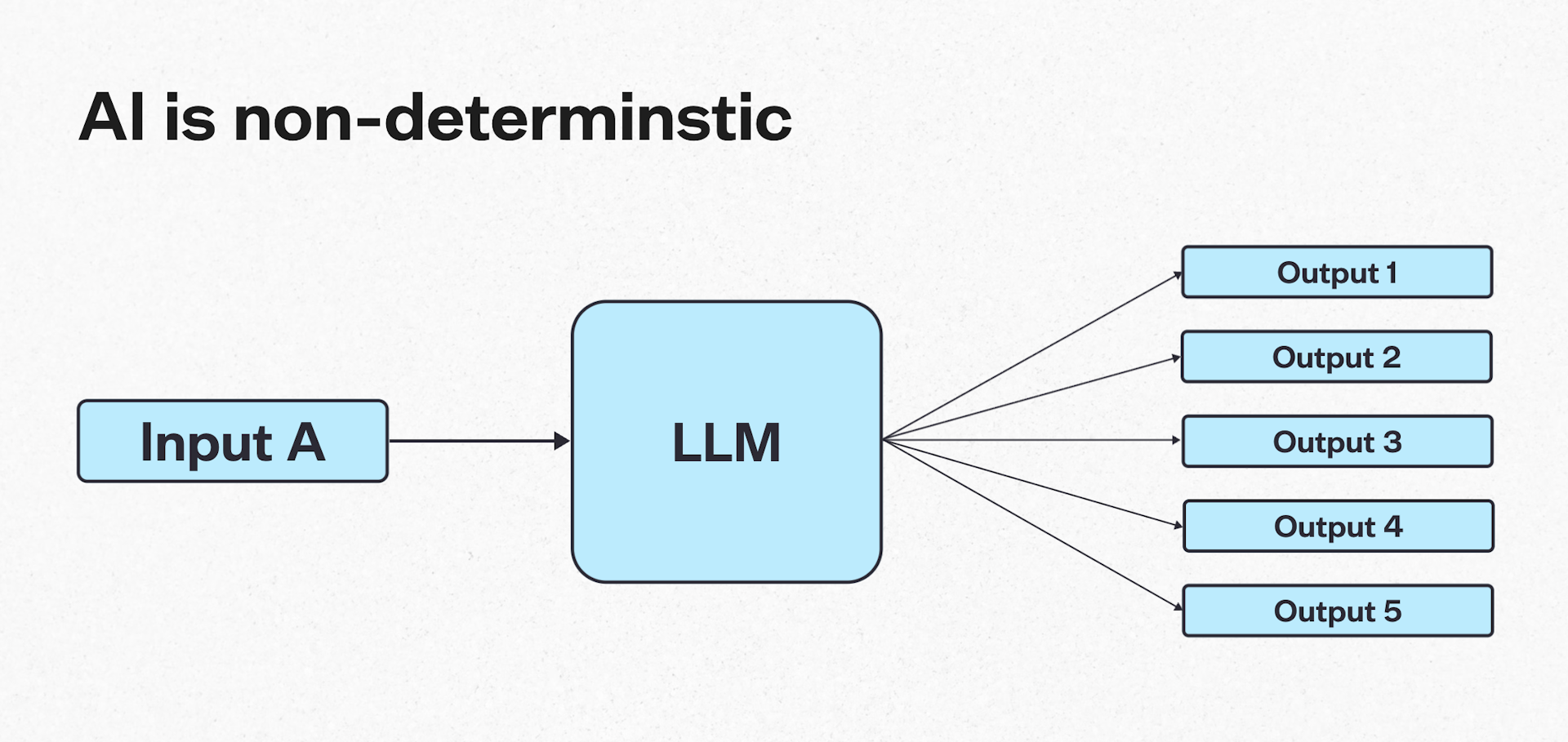

Large language models are non-deterministic by design: they typically sample each token from a probability distribution, so the same prompt can produce different outputs.

In regulated environments, that variability introduces a new class of risk. Decisions in healthcare, legal and financial services hinge on producing outputs that align with established standards, accepted interpretations and defensible trade-offs. Ultimately, the business has to be confident every answer can stand up to scrutiny.

Traditional software quality assurance assumes determinism. You define an expected output, assert against it and treat deviations as failures. That approach collapses when there is no single correct answer.
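To make the contrast concrete, here is a minimal Python sketch. The two responses, the `exact_match` and `meets_criteria` helpers and the required facts are all invented for illustration; they are not taken from any real product. The sketch shows an exact-match assertion rejecting a perfectly acceptable answer, while a criteria-based check accepts both phrasings.

```python
# Two hypothetical model responses to the same question. Both are acceptable.
RESPONSE_A = "The notice period is 30 days, as set out in clause 12."
RESPONSE_B = "Under clause 12, either party must give 30 days' notice."

# Hypothetical facts an expert says any acceptable answer must contain.
REQUIRED = ["30 days", "clause 12"]


def exact_match(output: str, expected: str) -> bool:
    """Traditional assertion: passes only if the strings are identical."""
    return output == expected


def meets_criteria(output: str, required_facts: list[str]) -> bool:
    """Criteria-based check: passes if every required fact is present."""
    text = output.lower()
    return all(fact.lower() in text for fact in required_facts)


print(exact_match(RESPONSE_B, RESPONSE_A))   # False: wording differs
print(meets_criteria(RESPONSE_A, REQUIRED))  # True
print(meets_criteria(RESPONSE_B, REQUIRED))  # True
```

Substring matching is deliberately crude here. The point is only that the check targets properties of a good answer rather than one fixed string.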

As a result, teams get stuck, unable to trust AI to produce outputs the business can stand behind.

Eval-driven development

So if traditional software testing does not hold up, how do you build AI products a business can stand behind?

This is where eval-driven development comes in.

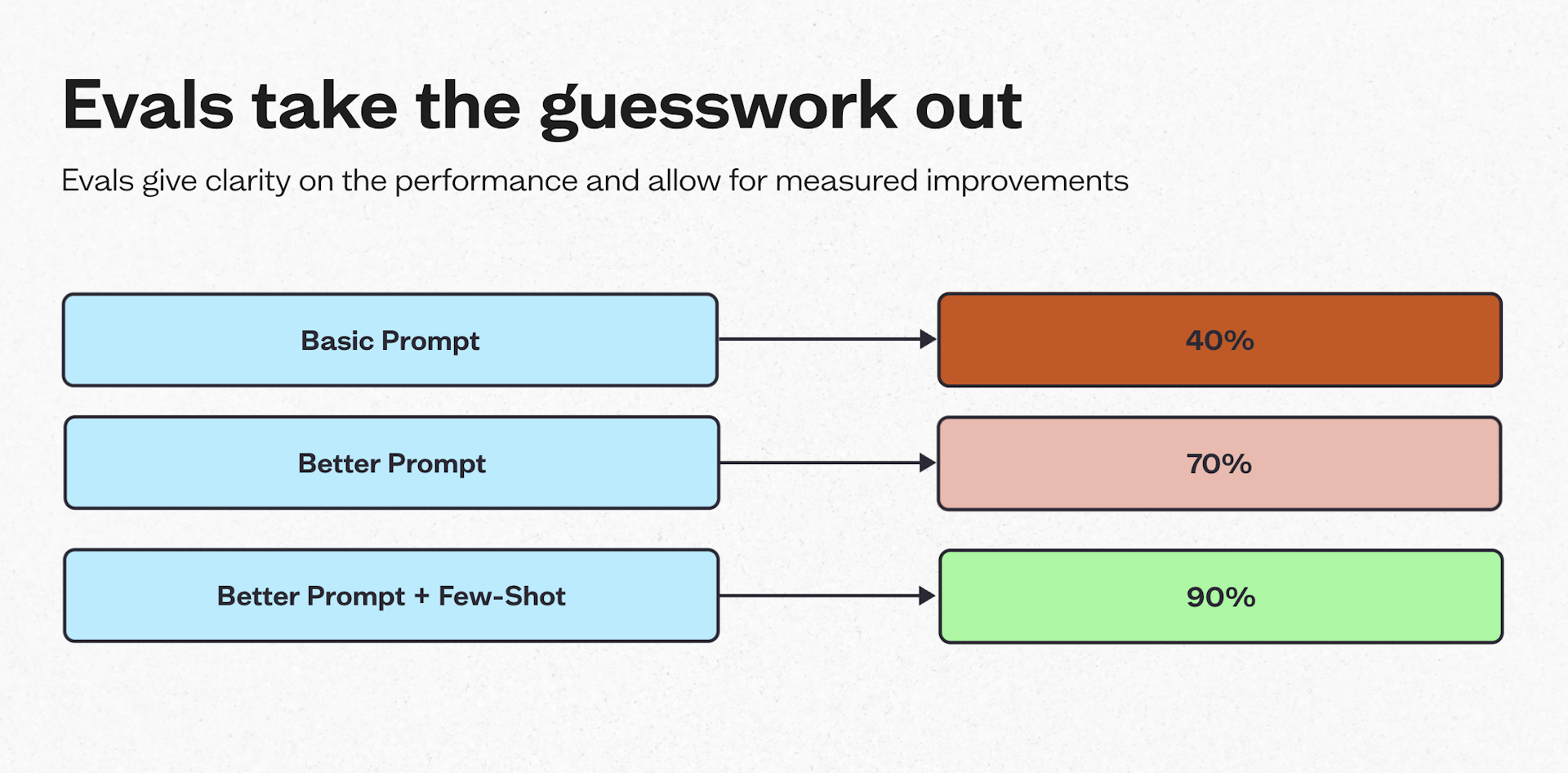

Evaluations, or evals, act as end-to-end tests for AI behaviour. They measure output quality against defined criteria, combining code-based grading, expert review and, where appropriate, LLM-based judging.

Crucially, evals give teams clear visibility into performance throughout the development process, allowing improvements to be measured and guided with confidence.
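As a rough sketch of the code-based grading part only, the Python below samples the same prompt several times and reports the fraction of outputs that pass every grader. Everything in it is an assumption made for illustration: the `EvalCase` structure, the `mentions` and `max_words` graders, and the `fake_model` stand-in that replays canned responses in place of a real LLM call. Expert review and LLM-based judging are not shown.

```python
import itertools
from dataclasses import dataclass
from typing import Callable

Grader = Callable[[str], bool]


@dataclass
class EvalCase:
    name: str
    prompt: str
    graders: list[Grader]


def mentions(term: str) -> Grader:
    """Grader that passes when the output mentions a term (case-insensitive)."""
    return lambda output: term.lower() in output.lower()


def max_words(limit: int) -> Grader:
    """Grader that passes when the output stays within a word budget."""
    return lambda output: len(output.split()) <= limit


def run_eval(case: EvalCase, generate: Callable[[str], str], samples: int = 5) -> float:
    """Sample the prompt repeatedly; return the share of outputs passing all graders."""
    passed = 0
    for _ in range(samples):
        output = generate(case.prompt)
        if all(grader(output) for grader in case.graders):
            passed += 1
    return passed / samples


# Stand-in for a non-deterministic model: replays canned responses in turn.
_canned = itertools.cycle([
    "This clause grants exclusivity for 12 months.",
    "The brand gets exclusivity for a year.",
    "The contract looks fine.",  # misses the exclusivity point
    "Exclusivity applies, so you cannot work with rival brands.",
])


def fake_model(prompt: str) -> str:
    return next(_canned)


case = EvalCase(
    name="flags_exclusivity",
    prompt="Summarise the key restrictions in this agreement.",
    graders=[mentions("exclusivity"), max_words(40)],
)

rate = run_eval(case, fake_model, samples=4)
print(f"{case.name}: pass rate {rate:.2f}")  # 3 of 4 samples pass: 0.75
```

Reporting a pass rate over repeated samples, rather than a single pass or fail, is one way to account for the same prompt producing different outputs.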

Our framework: using expert judgement as product infrastructure

Reliable AI workflows are built by treating domain expertise and evaluations as technical infrastructure, rather than as governance layers or post-hoc checks.

This framework has two distinct but tightly linked steps.

Step 1: surface and document domain expertise

Most expert judgement is tacit. Lawyers, clinicians, risk analysts and compliance specialists make decisions by weighing signals, thresholds and trade-offs that are rarely written down.

The first step in building AI outputs a business can trust is to make that expert judgement explicit.

In practice, this means bringing domain experts in early to define what “good” looks like. Teams create a playbook that surfaces implicit rules and identifies the boundaries beyond which outputs are no longer defensible or compliant.

This is not just about capturing outcomes. It is about capturing reasoning: why certain factors matter more than others, how risk is prioritised, and where professional judgement must be applied.
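One way to picture a playbook entry is as a small structured record that keeps the rule, the reasoning behind it and the point at which a human must step in. The Python sketch below is hypothetical: the `PlaybookRule` fields, the `Risk` levels and the two example rules are invented for illustration and do not describe any real playbook.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class PlaybookRule:
    rule_id: str
    statement: str   # what "good" looks like, in the expert's words
    reasoning: str   # why the expert cares about this factor
    risk: Risk       # how the expert prioritises it
    escalate: bool   # True where professional judgement must be applied


# Invented example entries.
PLAYBOOK = [
    PlaybookRule(
        rule_id="EXCL-01",
        statement="Exclusivity periods are flagged together with their duration.",
        reasoning="Long exclusivity can block other sources of income.",
        risk=Risk.HIGH,
        escalate=True,
    ),
    PlaybookRule(
        rule_id="PAY-01",
        statement="Payment terms state when payment falls due.",
        reasoning="Late payment affects cash flow.",
        risk=Risk.MEDIUM,
        escalate=False,
    ),
]


def needs_expert_review(playbook: list[PlaybookRule]) -> list[str]:
    """IDs of rules whose outputs should be routed to a human expert."""
    return [rule.rule_id for rule in playbook if rule.escalate]


def rules_at_or_above(playbook: list[PlaybookRule], level: Risk) -> list[str]:
    """IDs of rules at or above a given risk level."""
    return [rule.rule_id for rule in playbook if rule.risk.value >= level.value]


print(needs_expert_review(PLAYBOOK))             # ['EXCL-01']
print(rules_at_or_above(PLAYBOOK, Risk.MEDIUM))  # ['EXCL-01', 'PAY-01']
```

Keeping `reasoning` alongside each rule means the justification travels with the rule when developers later turn it into an eval.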

Step 2: measure output quality through evals

Once “good” is clearly defined, developers take that expertise and build evals.

These evals allow teams to measure the system against agreed parameters. Instead of relying on spot checks to catch output issues, teams can detect problems early and consistently.

From here, evals become part of the core development loop. They run on every meaningful change to the system, giving teams a clear signal of whether AI behaviour is strengthening or slipping below agreed standards.

This creates a disciplined cycle of measurement and refinement. Teams review where outputs move out of bounds, identify where eval coverage is missing, and expand it accordingly. Prompts are adjusted, architecture evolves, guardrails are tightened, and as the system scales it moves towards the level of consistency and accountability required for use in regulated environments.
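The "strengthening or slipping" signal can be sketched as a comparison between the current eval scores and an agreed baseline. In the Python below, the eval names, the scores, the `find_regressions` helper and the 0.02 tolerance are all assumptions chosen for illustration.

```python
# Hypothetical pass rates agreed as the baseline, keyed by eval name.
BASELINE = {"cites_source": 0.95, "flags_high_risk": 0.90, "plain_language": 0.85}

# Hypothetical pass rates measured after a prompt change.
CURRENT = {"cites_source": 0.96, "flags_high_risk": 0.84, "plain_language": 0.84}


def find_regressions(
    baseline: dict[str, float],
    current: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, tuple[float, float]]:
    """Return evals whose score fell more than `tolerance` below baseline.

    Each value is a (baseline_score, current_score) pair. An eval missing
    from `current` is treated as scoring 0.0, so dropped coverage is flagged.
    """
    regressions = {}
    for name, base in baseline.items():
        score = current.get(name, 0.0)
        if score < base - tolerance:
            regressions[name] = (base, score)
    return regressions


regressions = find_regressions(BASELINE, CURRENT)
for name, (base, score) in regressions.items():
    print(f"REGRESSION {name}: {base:.2f} -> {score:.2f}")
# Prints: REGRESSION flags_high_risk: 0.90 -> 0.84
```

A small tolerance stops normal sampling noise from blocking every change, while a real drop still surfaces. In a pipeline, a non-empty result could fail the build.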

Case study: legal contract review

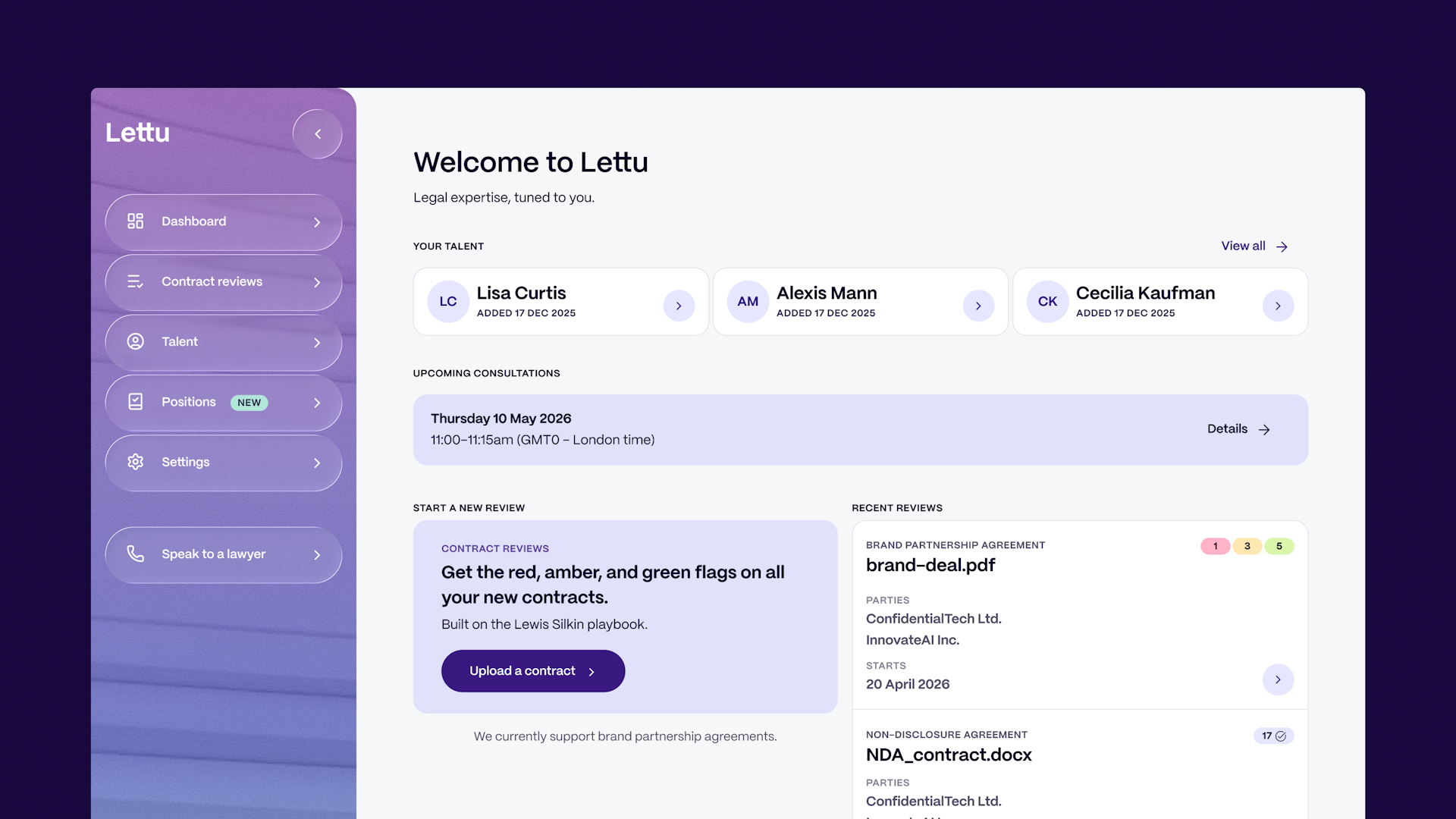

In collaboration with Lewis Silkin, we designed and launched Lettu, an AI-powered legal platform that brings expert contract analysis directly to creators and talent managers.

Users can upload agreements and quickly understand key obligations, restrictions and areas of concern, explained in clear, practical language.

But making that possible meant turning legal judgement into something the system could evaluate reliably. We worked closely with lawyers to surface how contracts are reviewed in practice, then used that knowledge to define the evaluation framework that now guides the product.

Step one: capturing legal judgement

The initial work focused on surfacing legal judgement. Lawyers defined required clauses, risk classifications, escalation thresholds and negotiation boundaries, along with the reasoning behind those distinctions.

Key to this was mapping how contracts are reviewed in practice: what lawyers look for first, how issues are identified and prioritised, and how findings are presented back to clients.

Step two: evaluating against real work

This knowledge was then structured into a playbook that acted as the system’s source of truth.

Evals were built and tested against real contracts, with expected outputs defined by the playbook. Scoring went beyond simple detection to cover clause identification, risk categorisation and the quality of the reasoning used to justify each assessment.
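The three scoring dimensions can be illustrated with a simplified Python sketch. This is not Lettu's implementation: the `Expected` and `Finding` structures, the clause names, the risk labels and the key-point matching used as a stand-in for reasoning quality are all assumptions. In practice, reasoning quality would more plausibly be judged by expert review or an LLM-based judge than by substring checks.

```python
from dataclasses import dataclass


@dataclass
class Expected:
    clause: str
    risk: str
    key_points: list[str]  # points the playbook expects the reasoning to cover


@dataclass
class Finding:
    clause: str
    risk: str
    reasoning: str


def coverage(reasoning: str, key_points: list[str]) -> float:
    """Share of expected key points mentioned in the reasoning."""
    if not key_points:
        return 1.0
    text = reasoning.lower()
    return sum(point.lower() in text for point in key_points) / len(key_points)


def score_review(expected: list[Expected], actual: list[Finding]) -> dict[str, float]:
    """Score one contract review on three dimensions, each between 0 and 1."""
    exp = {e.clause: e for e in expected}
    act = {f.clause: f for f in actual}
    found = sorted(exp.keys() & act.keys())

    identification = len(found) / len(exp) if exp else 0.0
    if found:
        risk = sum(act[c].risk == exp[c].risk for c in found) / len(found)
        reasoning = sum(coverage(act[c].reasoning, exp[c].key_points) for c in found) / len(found)
    else:
        risk = reasoning = 0.0

    return {
        "clause_identification": round(identification, 2),
        "risk_categorisation": round(risk, 2),
        "reasoning_quality": round(reasoning, 2),
    }


# Invented expected output for one contract, as a playbook might define it.
expected = [
    Expected("exclusivity", "high", ["12 months", "competing brands"]),
    Expected("payment_terms", "medium", ["90 days"]),
    Expected("termination", "low", ["30 days"]),
]

# Invented system output: misses termination and under-rates payment risk.
actual = [
    Finding("exclusivity", "high", "Exclusivity lasts 12 months and blocks work with competing brands."),
    Finding("payment_terms", "low", "Payment is due within 90 days, which is slow."),
]

scores = score_review(expected, actual)
print(scores)
# {'clause_identification': 0.67, 'risk_categorisation': 0.5, 'reasoning_quality': 1.0}
```

Scoring the dimensions separately shows where a review fell short: here the system missed a clause and misjudged one risk level, even though its reasoning covered the expected points for the clauses it did find.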

The outcome

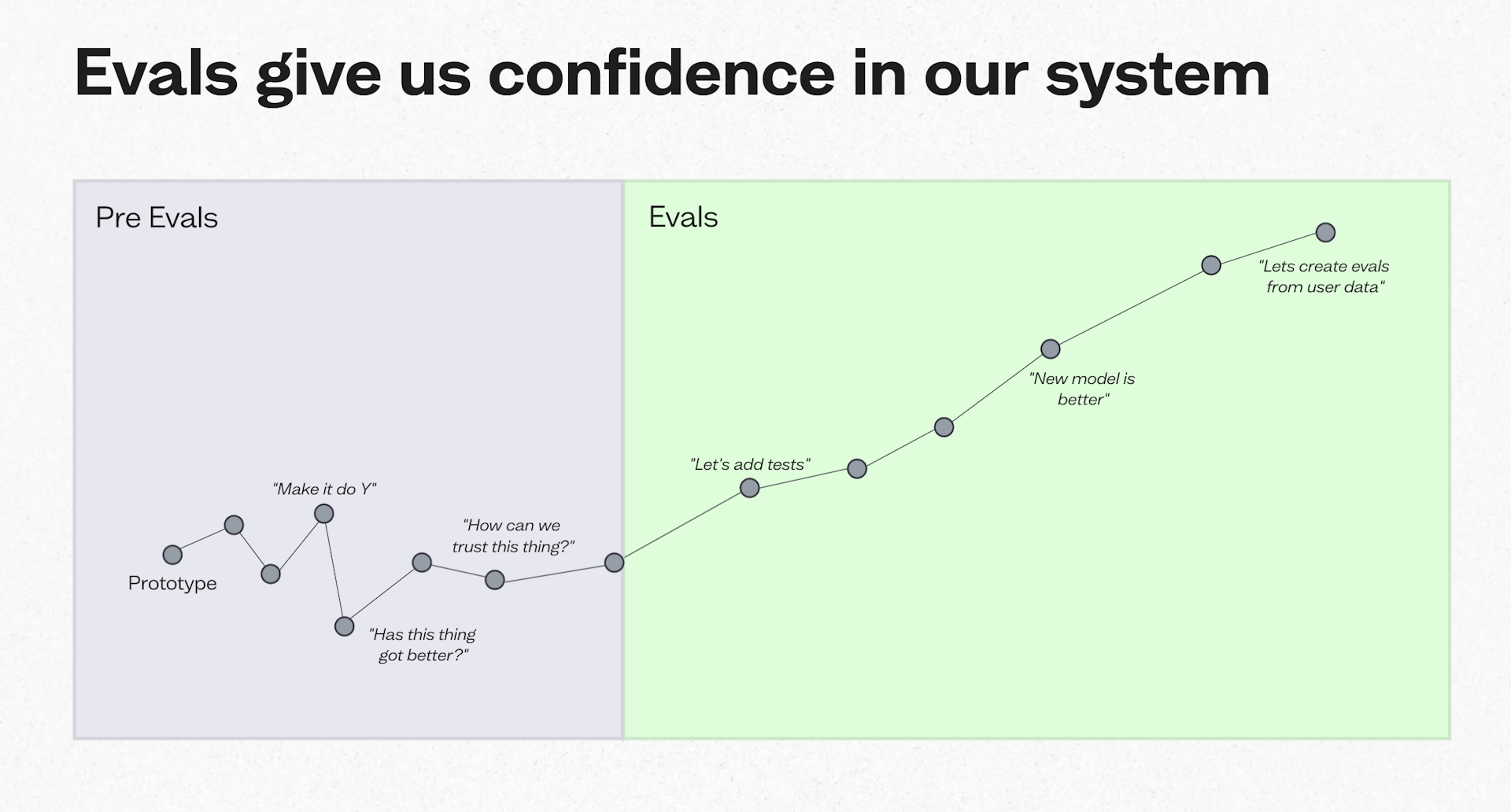

This approach allowed the team to scale safely. Changes to prompts, architecture or models could be evaluated against a stable baseline of expert judgement, giving the team clear visibility into system performance throughout development.

Regressions were caught early, behaviour remained consistent as the system evolved, and the team could move forward with the confidence needed to launch the product.

Expertise is your competitive edge

As AI capability continues to advance, the bottleneck in regulated domains is no longer access to technology. It is the ability to turn probabilistic systems into tools that behave consistently, transparently, and defensibly under real-world constraints.

Product development itself has not fundamentally changed. What has changed is where testing sits in the process.

In regulated AI systems, testing can no longer be something that happens at the end. It needs to be designed in from the start, using domain experts to define quality and evaluations to continuously enforce it.

The teams that succeed will be the ones that can move AI systems into production by staying close to their true competitive advantage: their expertise.